The AI Landscape

Local, open, closed & what it means for you.

Local Models Are Coming to Your Laptop

Cheaper Hardware

Models are getting cheaper and easier to run on smaller hardware every month.

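Apple Silicon & MLX

Apple ships a dedicated machine learning accelerator (the Neural Engine) in every Apple silicon laptop, and its open-source MLX framework is designed for running models on that hardware.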

Privacy Benefit

Your data never leaves your device. Sensitive documents can be analyzed locally.

Becoming Normal

Running models locally is becoming standard, not niche.

Less RAM, Same Quality

Google researchers demonstrated that quantization-aware training (QAT) lets capable models run with significantly less memory while keeping quality close to the full-precision versions - making local AI more accessible on standard hardware.

Source: Google Gemma QAT - bringing AI to consumer GPUs
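
To make the memory claim concrete, here is a rough back-of-the-envelope sketch. It assumes that memory is dominated by the model weights and that quantization simply stores each weight in fewer bits. The 27-billion-parameter size is an illustrative assumption, and the estimate ignores runtime overhead such as the context cache, so real usage will be somewhat higher.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Illustrative assumption: a model with 27 billion parameters.
PARAMS = 27e9

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label} weights: ~{weight_memory_gb(PARAMS, bits):.1f} GB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts the weight footprint to a quarter (roughly 54 GB down to 13.5 GB), which is the difference between needing server hardware and fitting on a well-equipped consumer machine.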

Perspective: “Local = Worse” is Outdated

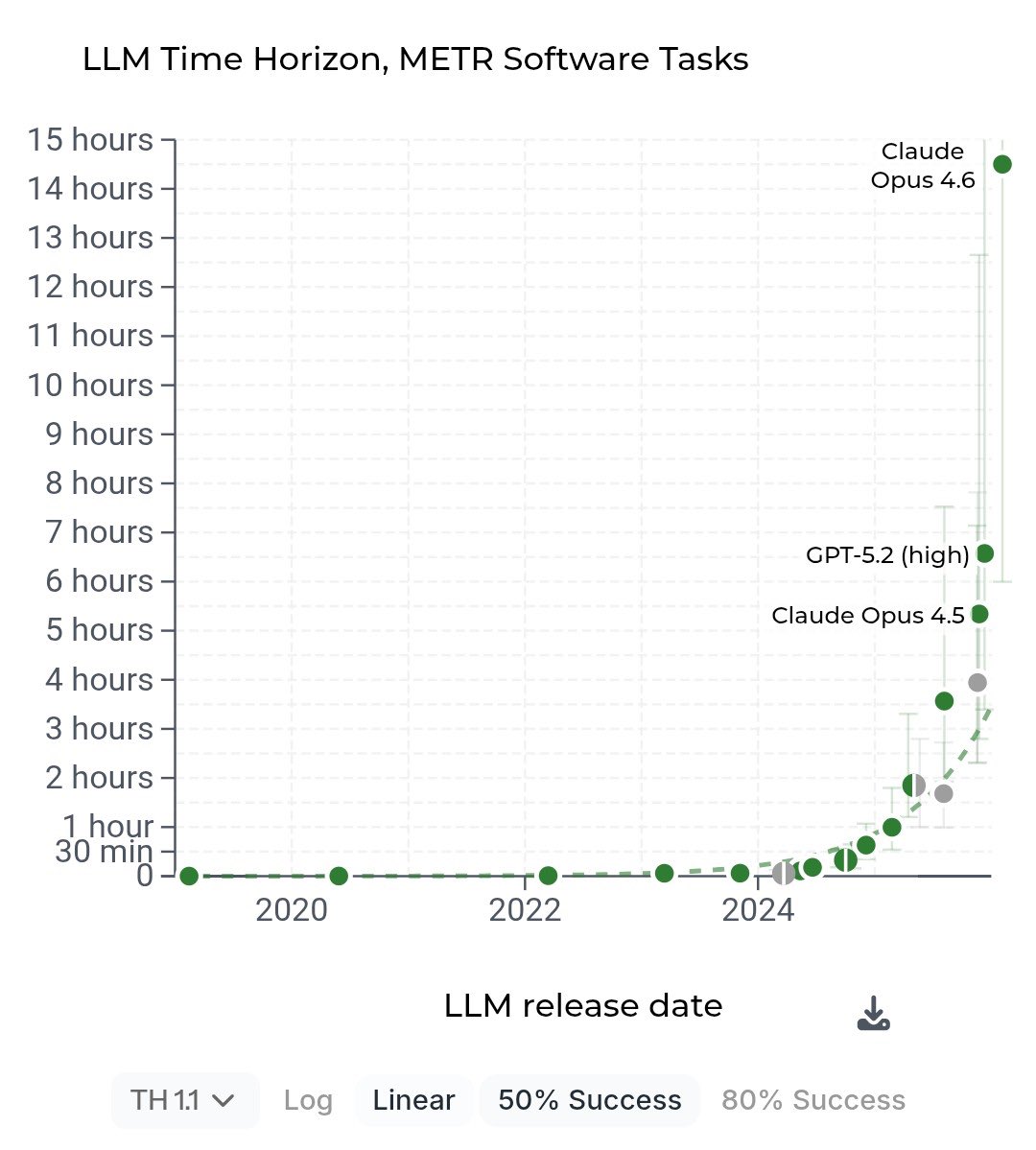

Today's local models running on a laptop outperform the original ChatGPT (GPT-3.5, November 2022) that started the entire revolution. The model that amazed the world 3 years ago is now surpassed by software running on your MacBook - offline, private, free. Progress is exponential.

Closed Source vs Open Source

| | Closed Source | Open Source |
|---|---|---|
| Examples | Anthropic (Claude), OpenAI (ChatGPT) | Meta (Llama), DeepSeek, Qwen |
| Price | Subscription / API fees | Free (self-host or cheap API) |
| Customization | Limited to provider's options | Full control, fine-tunable |
| Guardrails | Built-in, polished | Fewer restrictions |
| Privacy | Data goes to provider | Runs on your infrastructure |

Trade-off: convenience & safety vs control & cost.

Geopolitical angle: Chinese labs such as DeepSeek and Qwen open-sourcing powerful models changes the playing field entirely. On the US side, Meta's Llama 4 Scout has a 10M token context window - openly available weights, free to download.

Let's wrap it up - what does all of this mean for you?

Key takeaways →